“I’m just very curious—got to find out what makes things tick… all our people have this curiosity; it keeps us moving forward, exploring, experimenting, opening new doors.” – Walt Disney

One word at a time. It is like a stream of consciousness. Actions, objects, colors, feelings and sounds paint across the page like a slow moving brush. Each word adds to the crescendo of thought. Each phrase, a lattice of cognition. It assembles structure. It conveys scenes. It expresses logic, reason and reality in strokes of font and punctuation. It is the miracle of writing. Words strung together, one by one, single file, transcending and preserving time and thought.

I love writing. But it isn’t the letters on the page that excite me. It is the progression of thought. Think about this for a moment. How do you think? I suspect you use words. In fact, I bet you have been talking to yourself today. I promise, I won’t tell! Sure, you may imagine pictures or solve puzzles through spatial inference, but if you are like me, you think in words too. Those “words” are likely more than English. You probably use tokens, symbols and math expressions to think as well. If you know more than one language, you have probably discovered that there are some ways you can’t think in English and must use the other forms. You likely form ideas, solve problems and express yourself through a progression of those words and tokens.

Over the past few weekends I have been experimenting with large language models (LLMs) that I can configure, fine tune and run on consumer grade hardware. By that, I mean something that will run on an old Intel i5 system with a Nvidia GTX 1060 GPU. Yes, it is a dinosaur by today’s standards, but it is what I had handy. And, believe it or not, I got it to work!

Before I explain what I discovered, I want to talk about these LLMs. I suspect you have all personally seen and experimented with ChatGPT, Bard, Claude or the many other LLM chatbots out there. They are amazing. You can have a conversation with them. They provide well-structured thought, information and advice. They can reason and solve simple puzzles. Researchers agree that they would probably even pass the Turing test. How are these things doing that?

LLMs are made up of neural nets. Once trained, they receive an input and provide an output. But they have only one job. They provide one word (or token) at a time. Not just any word, the “next word.” They are predictive language completers. When you provide a prompt as the input, the LLM’s neural network will determine the most probable next word it should produce. Isn’t that funny? They just guess the next word! Wow, how is that intelligent? Oh wait… guess what? That’s sort of what we do too!

So how does this “next word guessing” produce anything intelligent? Well, it turns out, it’s all because of context. The LLM networks were trained using self-attention to focus on the most relevant context. The mechanics of how it works are too much for a Monday email, but if you want to read more see the paper, Attention Is All You Need which is key in how we got to the current surge in generative pre-trained transformer (GPT) technology. That approach was used to train these models on massive amounts of written text and code. Something interesting began to emerge. Hyper-dimensional attributes formed. LLMs began to understand logic, syntax and semantics. They began to be able to provide logical answers to prompts given to them, recursively completing them one word at a time to form an intelligent thought.

Back to my experiment… Once a language model is trained, the read-only model can be used to answer prompts, including questions or conversations. There are many open source versions out there on platforms like Huggingface. Companies like Microsoft, OpenAI, Meta and Google have built their own and sell or provide for free. I downloaded the free Llama 2 Chat model. It comes in 7, 13 and 70 billion parameter models. Parameters are essentially the variables that the model uses to make predictions to generate text. Generally, the higher the parameters, the more intelligent the model. Of course, the higher it is, the larger the memory and hardware footprint needed to run the model. For my case, I used the 7B model with the neural net weights quantized to 5-bits to further reduce the memory needs. I was trying to fit the entire model within the GPU’s VRAM. Sadly, it needed slightly over the 6GB I had. But I was able to split the neural network, loading 32 of the key neural network layers into the GPU and keeping the rest on the CPU. With that, I was able to achieve 14 tokens per second (a way to measure how fast the model generates words). Not bad!

I began to test the model. I love to test LLMs with a simple riddle*. You would probably not be surprised to know that many models tell me I haven’t given them enough information to answer the question. To be fair, some humans do to. But for my experiment, the model answered correctly:

> Ram's mom has three children, Reshma, Raja and a third one. What is the name of the third child? The third child's name is Ram.

I went on to have the model help me write some code to build a python flask based chatbot app. It makes mistakes, especially in code, but was extremely helpful in accelerating my project. It has become a valuable assistant for my weekend coding distractions. My next project is to provide a vector database to allow it to reference additional information and pull current data from external sources.

I said this before, but I do believe we are on the cusp of a technological transformation. These are incredible tools. As with many other technologies that have been introduced, it has the amazing potential to amplify our human ability. Not replacing humans, but expanding and strengthening us. I don’t know about you, but I’m excited to see where this goes!

Stay curious! Keep experimenting and learning new things. And by all means, keep writing. Keep thinking. It is what we do… on to the next word… one after the other… until we reach… the end.

- TinyLLM – Instructions on how I hosted the Llama 2 model on the small hardware: https://github.com/jasonacox/TinyLLM

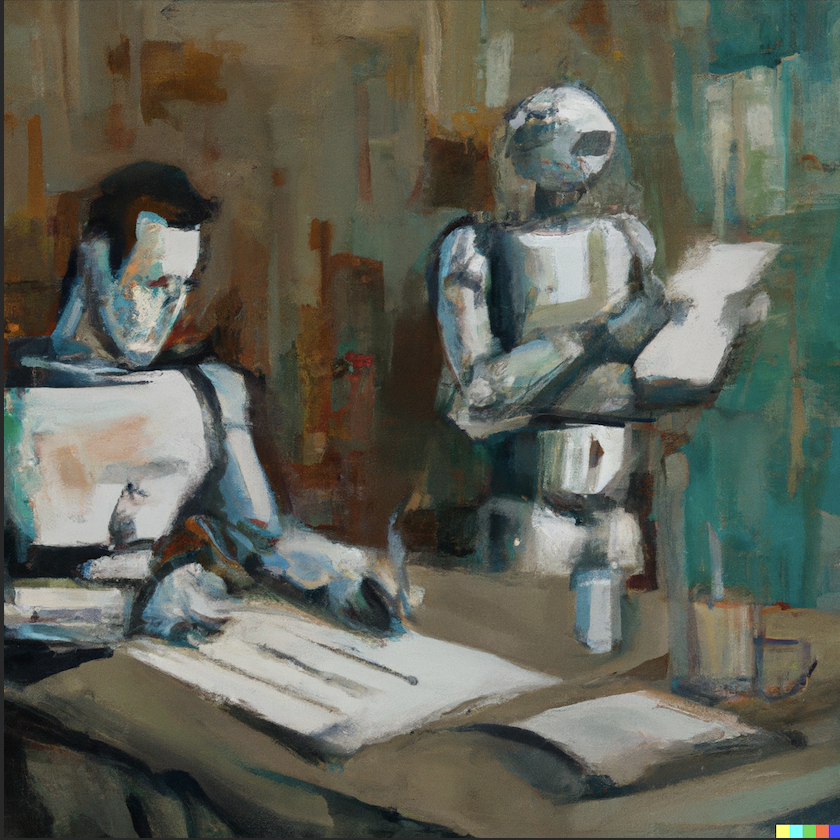

- Artwork generated by OpenAI DALL-E 2.

- Credit for “Ram’s mom” riddle to my good friend, Tapabrata “Topo” Pal